Master AI Image Generator Prompts: The 2026 Framework for Pro Visuals


Editorial process

Reviewed by SectoJoy and published on May 7, 2026. We update this article when product details, examples, or tool guidance change. Last updated: May 7, 2026.

SectoJoy

I'm an independent developer who builds iOS and web apps, focused on practical SaaS products. I specialize in AI-driven SEO and am constantly exploring how intelligent technologies can drive sustainable growth and efficiency.

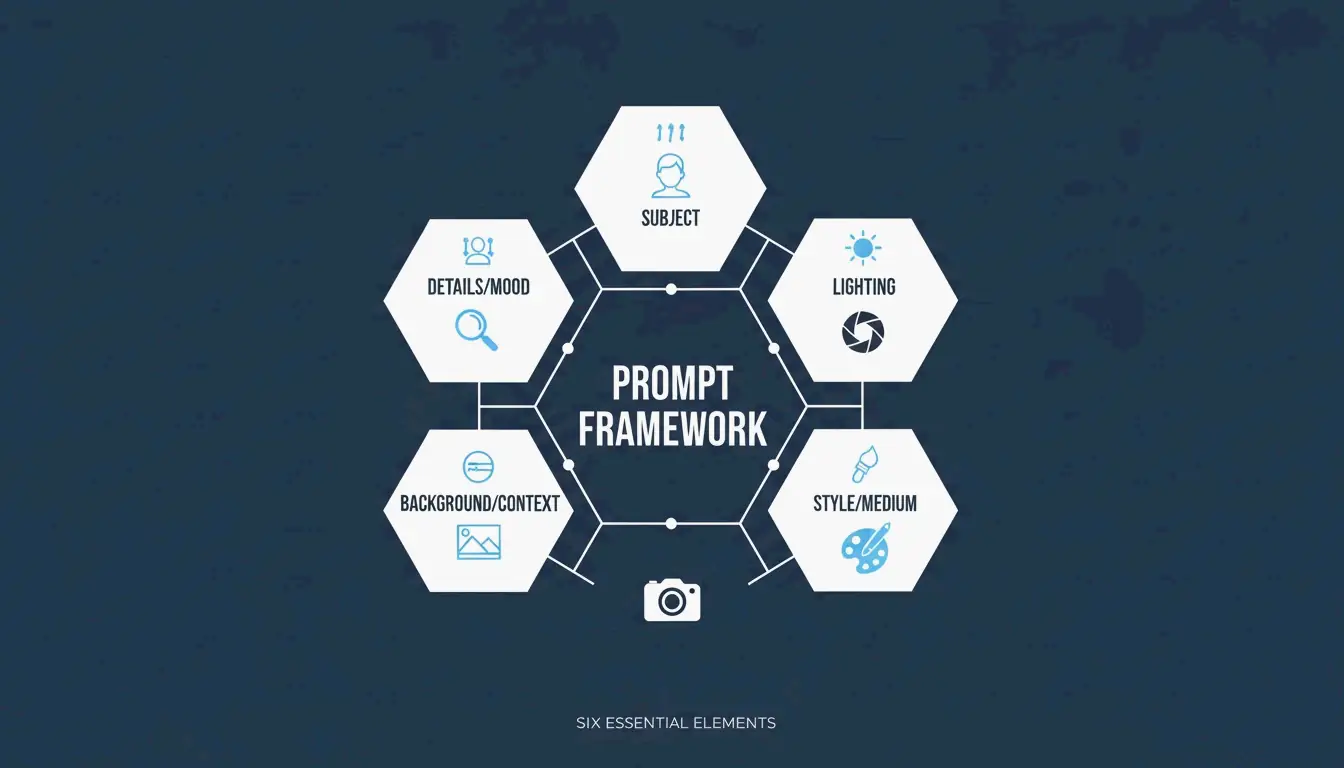

To master AI image generator prompts and the 2026 framework for pro visuals, you need to shift from using vague descriptions to a technical Six-Element Framework: Subject, Environment, Style, Lighting, Composition, and Quality Modifiers. By using next-gen tools like GPT Image 2 for text and Nano Banana 2 for 4K realism, creators can produce studio-grade results through consistent refinement and detailed, context-heavy instructions.

The Six-Element Prompt Framework: A 2026 Standard for Pro Visuals

In today’s digital landscape, high-end visuals aren’t the result of a lucky guess—they come from structured engineering. The goal is to stop “describing” and start “instructing.” Data from Adobe shows that by 2025, 67% of marketing teams had already built AI generation into their daily workflows, making prompt engineering a foundational professional skill.

The Six-Element Prompt Framework has become the industry benchmark. It ensures every part of an image is a deliberate choice rather than a random AI output:

- Subject: Be specific about the main focus (e.g., “a slim silver laptop open at a 90-degree angle” works better than just “a laptop”).

- Environment: Define the background or setting to ground the scene.

- Style: Name the specific medium, like “editorial photography,” “flat illustration,” or “3D render.”

- Lighting: Direct the light. Try “soft natural window light from the left” to set the mood.

- Composition: Act like a camera operator using terms such as “wide angle,” “eye-level perspective,” or “shallow depth of field.”

- Quality Modifiers: Use technical tags like “4K,” “ultra-realistic,” or “high-fidelity” to set the bar.
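Treating the six elements as separate fields is one way to make every part of the image a deliberate choice. The sketch below is a minimal illustration, assuming a plain comma-joined prompt in framework order works for your tool. The `SixElementPrompt` class is hypothetical, and the environment text is an invented example.

```python
from dataclasses import dataclass


@dataclass
class SixElementPrompt:
    """Hypothetical container: one plain-text field per framework element."""
    subject: str
    environment: str
    style: str
    lighting: str
    composition: str
    quality: str

    def render(self) -> str:
        # Join the elements in framework order, skipping any left blank.
        # Comma-joining is an assumption; some tools prefer full sentences.
        parts = [
            self.subject,
            self.environment,
            self.style,
            self.lighting,
            self.composition,
            self.quality,
        ]
        return ", ".join(p.strip() for p in parts if p.strip())


prompt = SixElementPrompt(
    subject="a slim silver laptop open at a 90-degree angle",
    environment="on a clean oak desk in a minimalist home office",  # illustrative
    style="editorial photography",
    lighting="soft natural window light from the left",
    composition="eye-level perspective, shallow depth of field",
    quality="4K, ultra-realistic",
)
print(prompt.render())
```

Keeping the fields separate also makes the refinement loop easier: you can swap one element (say, lighting) while leaving the other five untouched.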

Technical Specs: Why Precision Trumps Adjectives in 2026

Words like “stunning” or “beautiful” don’t tell an AI model what to do. In 2026, professional visuals depend on technical parameters. Specifying a “50mm lens” or “DSLR-style photography” steers the model toward real-world optics, including natural background blur (bokeh). According to the ImagineArt Guide, controlling the lighting is the single most effective way to move from a “fake AI look” to a professional photograph.
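A quick way to apply this rule is to scan drafts for adjectives that express a reaction rather than an instruction. This is a rough sketch: the `VAGUE_WORDS` list is an assumption made for illustration, not taken from any official guide, so extend it to suit your own prompts.

```python
import re

# Illustrative word list (an assumption): adjectives that describe a
# reaction rather than a concrete visual instruction.
VAGUE_WORDS = {"stunning", "beautiful", "amazing", "gorgeous", "awesome"}


def find_vague_words(prompt: str) -> list[str]:
    """Return the vague adjectives found in a prompt, in order of appearance."""
    words = re.findall(r"[a-z]+", prompt.lower())
    return [w for w in words if w in VAGUE_WORDS]


vague = "a stunning, beautiful portrait of a chef"
precise = "portrait of a chef, 50mm lens, DSLR-style photography, natural bokeh"
print(find_vague_words(vague))
print(find_vague_words(precise))
```

Each hit is a cue to swap the adjective for a concrete parameter such as lens, lighting direction, or medium.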

Case Study: Achieving 75% Cost Reduction in E-commerce Production

This framework isn’t just about aesthetics; it’s changing the economy of content. As reported by Pixazo, one e-commerce platform used Seedream 4.5 and 5.0 to generate over 10,000 product images every month. By swapping traditional photoshoots—which typically cost between $2,000 and $10,000—for structured AI prompting, the company cut creative costs by 75% and brought products to market much faster.

GPT Image 2: Mastering Typography and Complex Instruction Following

GPT Image 2 is a major breakthrough for 2026 because it can follow layered instructions and render accurate, legible text within an image. Earlier models often produced “gibberish” lettering, but GPT Image 2 follows specific typographic rules. To get it right, pros put the exact wording in quotes and specify a font style such as “bold sans-serif” or “thin serif.”
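The quoting convention is easy to enforce in code. The helper below is a hypothetical sketch, not part of any OpenAI SDK. It appends the literal text in double quotes, followed by a font style and placement, and it rejects text that itself contains double quotes, since those would make the literal span ambiguous.

```python
def typography_prompt(scene: str, text: str, font: str, placement: str) -> str:
    """Hypothetical helper: append a quoted-text instruction to a scene.

    The exact wording goes inside double quotes so the model can treat it
    as literal text, followed by the font style and where it should sit.
    """
    if '"' in text:
        # A stray quote would blur where the literal text starts and ends.
        raise ValueError("text to render must not contain double quotes")
    return f'{scene}. Render the text "{text}" in {font}, {placement}.'


print(typography_prompt(
    "A flat illustration of a summer sale poster",
    "SUMMER SALE",
    "bold sans-serif",
    "centered at the top",
))
```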

The ‘2K Reliability Boundary’: Managing Resolution Constraints

When working with GPT Image 2, technical precision applies to output dimensions too. The model is flexible, but both width and height should always be multiples of 16. While it can target 4K (3840×2160), OpenAI’s own documentation suggests treating anything over 2560×1440 (2K) as an “experimental boundary.” For the most consistent textures and logic, staying within the 2K limit is usually the safer bet for production.
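These constraints can be checked before a request is sent. This is a local sketch, not an OpenAI API call. Two interpretations are assumptions: that “over 2560×1440” means a larger total pixel count, and that the 3840px cap applies to the longest edge. Confirm both against the current documentation.

```python
MAX_EDGE = 3840               # assumed cap on the longest edge (4K width in the text)
STABLE_PIXELS = 2560 * 1440   # "2K" boundary; assumed to mean total pixel count


def snap_to_16(value: int) -> int:
    """Round a dimension down to the nearest multiple of 16 (minimum 16)."""
    return max(16, (value // 16) * 16)


def check_size(width: int, height: int) -> str:
    """Classify a requested size against the constraints described above."""
    if width % 16 or height % 16:
        return "invalid: both dimensions must be multiples of 16"
    if max(width, height) > MAX_EDGE:
        return "invalid: longest edge exceeds 3840px"
    if width * height > STABLE_PIXELS:
        return "experimental: above the 2K boundary"
    return "ok"


for w, h in [(2560, 1440), (3840, 2160), (1000, 1000)]:
    print((w, h), check_size(w, h))
print(snap_to_16(1000))
```

Snapping down rather than up keeps a corrected size from crossing a boundary it was meant to stay under.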

Prompting for Brand Fonts and Layout Hierarchies

GPT Image 2 is built for “Context-Rich Prompts.” Instead of just describing what the image looks like, tell the AI what it’s for. IndianPrompt recommends framing prompts like this: “Generate a professional image for a blog article… the mood should be optimistic.” This helps the model choose color palettes and layouts that fit professional design standards automatically.
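A context-rich prompt can be templated so that purpose and mood always come before the visual description. The function below is a hypothetical sketch that follows the framing quoted above. The argument names and the example values are illustrative.

```python
def context_rich_prompt(purpose: str, mood: str, visual: str) -> str:
    """Hypothetical template: say what the image is for, then what it shows."""
    return (
        f"Generate a professional image for {purpose}. "
        f"The mood should be {mood}. "
        f"Visual description: {visual}."
    )


print(context_rich_prompt(
    purpose="a blog article about remote-work tools",   # illustrative
    mood="optimistic",
    visual="a bright home office with a laptop and a coffee mug",
))
```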

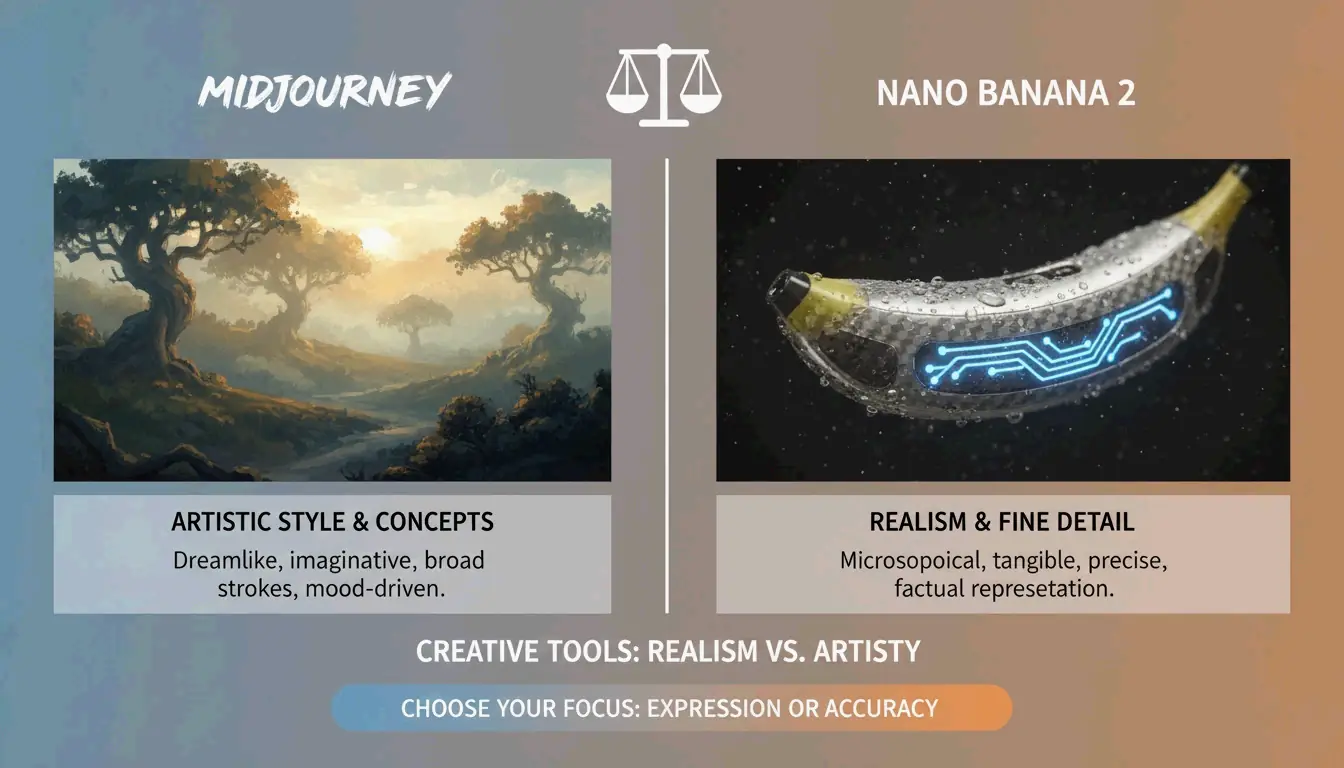

Nano Banana 2 and Flux 1.1 Pro: Pushing the Limits of Photorealism

If your goal is absolute photographic “truth,” Nano Banana 2 (Google’s Gemini 3 Pro Image) is the 2026 leader for micro-textures. It can render skin pores, fabric weaves, and aged materials at up to 4K resolution with a fidelity that rivals a real studio. AIMLAPI notes it is currently the most detailed model for architecture and product shots.

Using Flux 1.1 Pro for Natural Lighting and Developer Pipelines

Flux 1.1 Pro has become a favorite for developers because it excels at simulating natural light. It handles how light bounces and where shadows fall more realistically than models focused purely on “art.” For high-volume projects, Flux 1.1 Pro offers a great balance of quality and cost, especially when consistent lighting is a priority.

When to Choose Midjourney for Artistic ‘Mood’ vs. Photorealistic ‘Truth’

Even with the rise of technical models, Midjourney still holds a 26.8% market share in 2026, according to Prodia. Midjourney is the go-to when you need an “artistic vibe” or atmospheric imagery rather than a literal, technical document. It’s perfect for abstract concepts and editorial styles where the “feeling” of the image matters most.
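The recommendations in this section amount to a lookup from goal to model. The table below is a hypothetical summary of this article's guidance, not a benchmark result. The goal labels are illustrative keys, not parameters of any real API.

```python
# Hypothetical routing table summarizing this section's recommendations.
MODEL_FOR_GOAL = {
    "typography": "GPT Image 2",
    "micro-texture photorealism": "Nano Banana 2",
    "natural lighting at volume": "Flux 1.1 Pro",
    "artistic mood": "Midjourney",
}


def pick_model(goal: str) -> str:
    """Return the model suggested for a goal, or raise with the valid options."""
    try:
        return MODEL_FOR_GOAL[goal]
    except KeyError:
        options = ", ".join(sorted(MODEL_FOR_GOAL))
        raise ValueError(f"unknown goal {goal!r}; choose one of: {options}") from None


print(pick_model("typography"))
print(pick_model("artistic mood"))
```

Keeping the mapping in one place makes it easy to update as models change.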

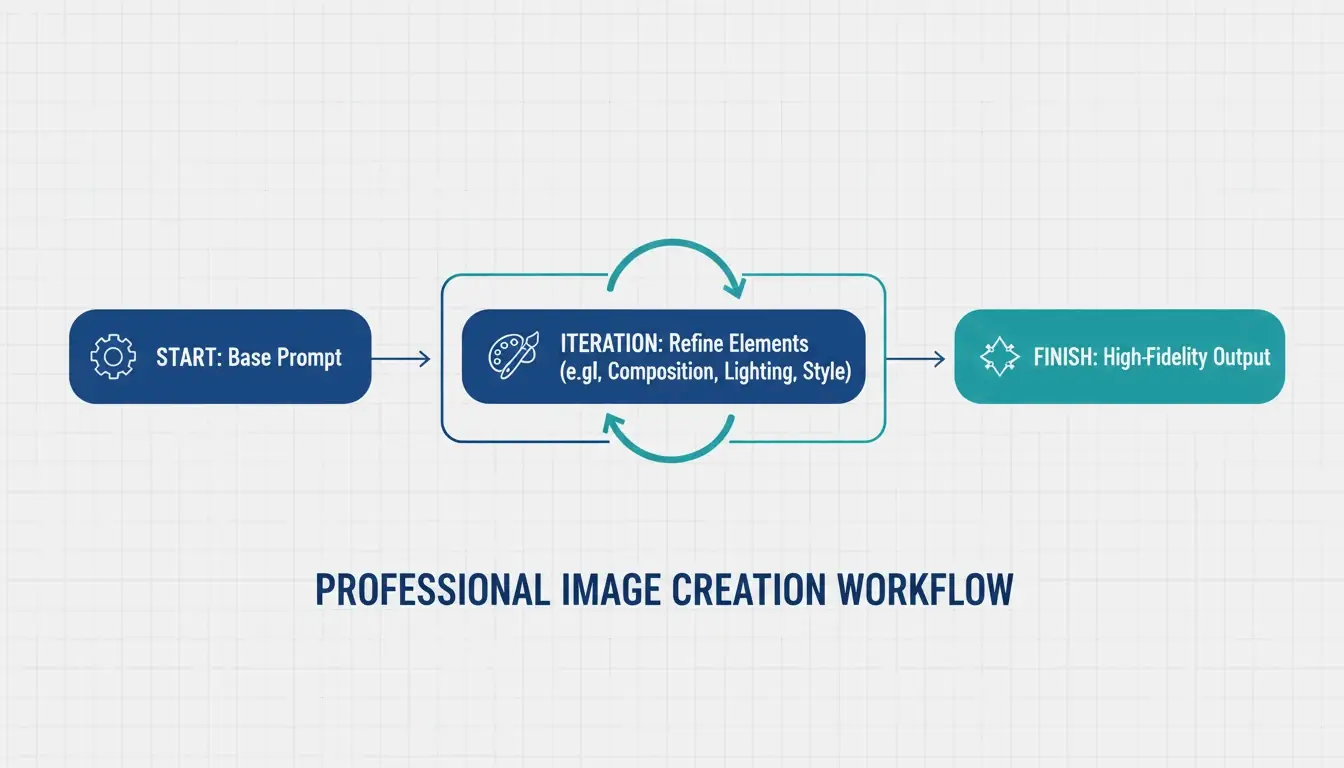

Advanced Techniques: Iterative Refinement and Multimodal Prep

Professional AI images are rarely perfect on the first try. The 3-5 step refinement loop is now the industry standard. Creators start with a base prompt to get the composition right, then use follow-up commands like “change only the jacket color, keep the face identical.” ImagineArt points out that restating “invariants”—the things that should not change—is the best way to keep the AI from drifting off track.
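The refinement loop can be scripted so that every follow-up repeats the invariants. This sketch assumes a chat-style tool that accepts plain-text follow-ups. The `refinement_prompt` helper and the example session are hypothetical. The session is a base prompt plus two edits, which is the low end of the 3-5 step range.

```python
def refinement_prompt(change: str, invariants: list[str]) -> str:
    """Hypothetical helper: request one edit and restate what must not change."""
    parts = [f"Change only {change}."]
    for item in invariants:
        # Restating each invariant on every step discourages drift.
        parts.append(f"Keep {item} identical.")
    return " ".join(parts)


base = ("editorial photo of a woman in a denim jacket on a city street, "
        "eye-level perspective, 50mm lens")
invariants = ["the face", "the pose", "the background", "the lighting"]
edits = ["the jacket color to olive green", "the sneakers to white leather"]

session = [base] + [refinement_prompt(e, invariants) for e in edits]
for step, text in enumerate(session, start=1):
    print(step, text)
```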

Using Negative Prompts to Eliminate 2026-Specific Artifacts

Negative prompting is still a key tool for quality control. By telling the AI what to leave out—like “extra fingers,” “text overlays,” or that generic “stock photo aesthetic”—you can clean up the final result. In 2026, this is especially useful for avoiding the “over-smoothed” plastic look that sometimes pops up in high-saturation images.
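A reusable exclusion list keeps negative prompts consistent across projects. This is a minimal sketch. The default terms come from the examples above, and the merge logic is an illustrative choice. How the resulting string is passed depends on the tool. Some expose a dedicated negative-prompt field, Midjourney uses its `--no` parameter, and tools without either may need the exclusions phrased in plain language.

```python
# Default exclusions taken from the examples in the text.
DEFAULT_EXCLUSIONS = [
    "extra fingers",
    "text overlays",
    "stock photo aesthetic",
    "over-smoothed plastic look",
]


def negative_prompt(extra: tuple[str, ...] = ()) -> str:
    """Merge default and task-specific exclusions into one comma-separated string."""
    seen: list[str] = []
    for term in DEFAULT_EXCLUSIONS + list(extra):
        term = term.strip().lower()
        if term and term not in seen:  # drop blanks and duplicates, keep order
            seen.append(term)
    return ", ".join(seen)


print(negative_prompt(("watermark", "Extra fingers")))
```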

Preparing Visuals for Animation: The Image-to-Video Pipeline

A major trend this year is prepping static images for video tools like Kling or Grok. When generating pro visuals, creators now optimize for “Image-to-Video” (I2V) readiness. This means ensuring high-resolution keyframes and consistent features so the AI can animate the scene without glitches, turning a still photo into a seamless motion clip.
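Part of I2V readiness can be checked automatically before keyframes go to a video tool. This sketch makes two assumptions. The 1080px minimum short edge is a hypothetical threshold, and matching aspect ratios across keyframes is an added rule of thumb. Neither comes from Kling or Grok documentation. Consistent character features still need a visual review.

```python
from dataclasses import dataclass


@dataclass
class Keyframe:
    name: str
    width: int
    height: int


def i2v_issues(frames: list[Keyframe], min_short_edge: int = 1080) -> list[str]:
    """List resolution and framing problems in a set of keyframes."""
    if not frames:
        return ["no keyframes supplied"]
    first = frames[0]
    issues = []
    for f in frames:
        if min(f.width, f.height) < min_short_edge:  # assumed minimum
            issues.append(f"{f.name}: short edge below {min_short_edge}px")
        # Cross-multiply to compare aspect ratios without float rounding.
        if f.width * first.height != f.height * first.width:
            issues.append(f"{f.name}: aspect ratio differs from {first.name}")
    return issues


frames = [Keyframe("open", 1920, 1080), Keyframe("close", 1080, 1080)]
print(i2v_issues(frames))
```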

Specialized Workflows: From Vector SVGs to Brand Consistency

For designers who need scalable files, Recraft V4 is the standout tool in 2026. It’s the only major model that outputs true SVG (scalable vector) files. According to AIMLAPI, its native brand kit support allows you to upload your own color palettes and logos, ensuring every generation fits your company’s specific design language.

Maintaining Character Consistency and Legal Compliance

Tools like Midjourney and Nano Banana 2 now offer “Character Reference” (Cref) tags, allowing the same character to appear in different scenes. This is a massive win for brand storytelling. On the legal side, things have become much clearer; Adobe Firefly, with over 6.5 billion visuals created, remains the top choice for big business because it is trained on licensed content and offers commercial protection that open-source models don’t.

Conclusion

By 2026, professional AI imagery has moved from creative guesswork to a structured engineering discipline. To get the best results, build every prompt with the Six-Element Framework, rely on GPT Image 2 for complex layouts and typography, and switch to Nano Banana 2 for high-end textures. Remember that the process is iterative: expect 3 to 5 cycles to polish the details and ensure brand alignment. Mastering these technical instructions turns AI from a simple tool into a high-performance digital studio.

FAQ

Which AI image generator is best for rendering clear text in 2026?

GPT Image 2 is the current industry leader for typography, according to AIMLAPI. It follows complex layout instructions better than Nano Banana 2 or Midjourney. For the best results, place the text in quotes and specify font style and placement within your prompt.

Can I use AI-generated images for commercial marketing purposes under 2026 policies?

Yes, but it depends on the tool’s license. Enterprise tiers of GPT Image 2 and Adobe Firefly generally allow commercial use. According to Prodia, Adobe Firefly is particularly noted for being commercially safe as it is trained on licensed content. Always check the latest May 2026 updates on AI disclosure requirements.

How do I maintain character consistency across multiple generated scenes?

Use ‘Character Reference’ (Cref) tags available in Midjourney and Nano Banana 2. Additionally, creating a ‘Character Seed’ prompt that defines fixed physical traits—such as age, hair color, and clothing—helps. As ImagineArt suggests, utilize iterative refinement to adjust backgrounds while keeping the subject static.
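A Character Seed works best when the fixed traits are stored once and pasted verbatim into every scene. This is a minimal sketch of the text side only. Cref tags and reference images are tool-specific and not shown. The `CharacterSeed` class and the example traits are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CharacterSeed:
    """Hypothetical seed: fixed physical traits reused verbatim in every scene."""
    age: str
    hair: str
    clothing: str

    def describe(self) -> str:
        return f"{self.age} with {self.hair}, wearing {self.clothing}"


def scene_prompt(seed: CharacterSeed, scene: str) -> str:
    """Combine the unchanged character description with a per-scene setting."""
    return f"{seed.describe()}, {scene}. Keep the character's appearance unchanged."


mara = CharacterSeed(
    age="a woman in her early 30s",
    hair="short auburn hair",
    clothing="a navy trench coat",
)
for scene in ["standing in a rainy train station", "sitting in a sunlit cafe"]:
    print(scene_prompt(mara, scene))
```

Making the dataclass frozen guards against accidentally editing a trait partway through a series.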

What are the recommended 2K and 4K resolution settings for GPT Image 2?

For 2K, use 2560×1440; for 4K, you can target 3840×2160. However, OpenAI’s Cookbook notes that for GPT Image 2, both dimensions should always be multiples of 16, and sizes above 2K, up to the 3840px cap, should be treated as an experimental boundary for single-pass generation.
